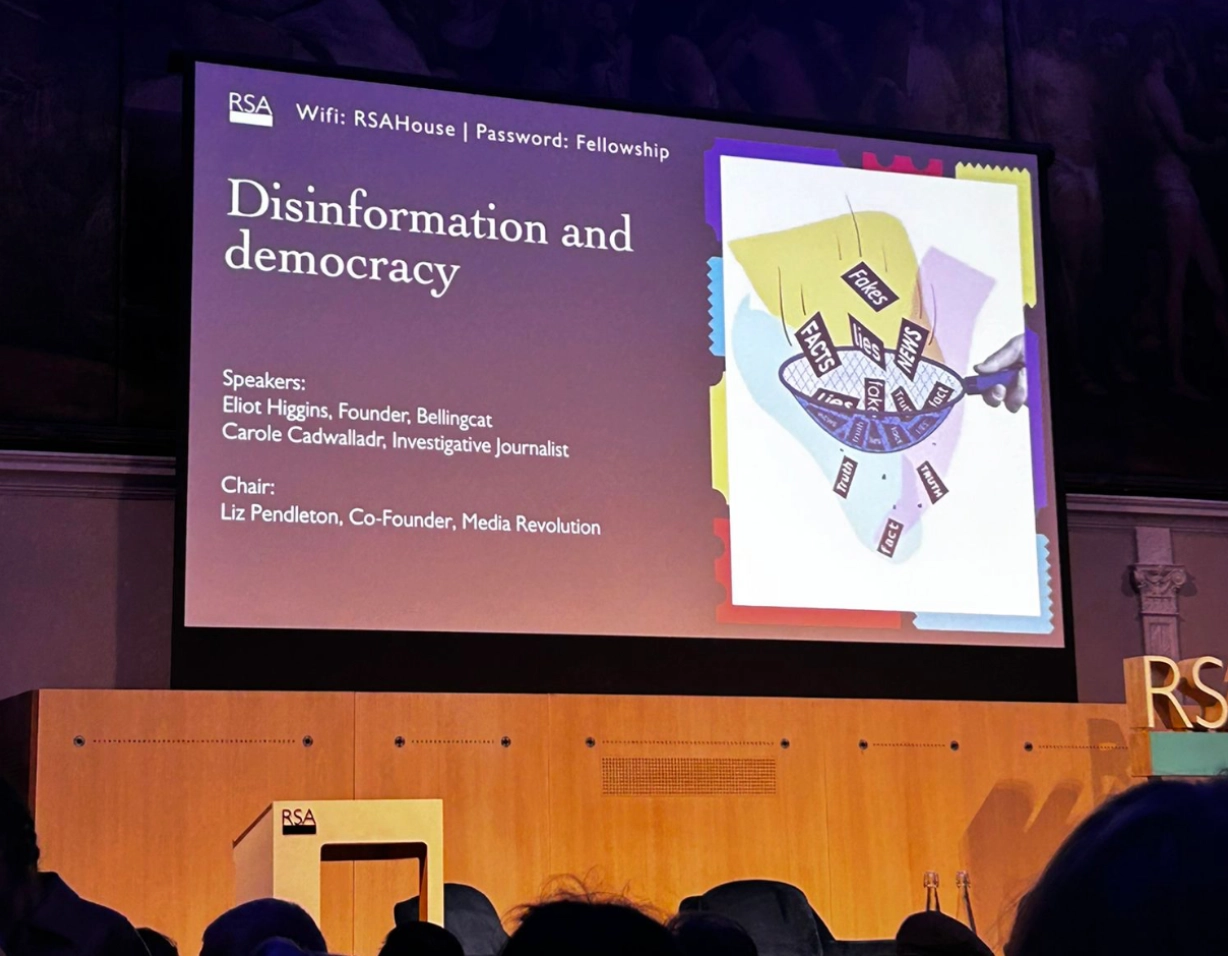

Democracy depends on a shared ability to tell what is true, to debate it in public, and to hold power to account. Given our increasing concern around these themes at TNSP, we would like to reflect on a recent event we attended, and some of its implications. The February 26th panel on disinformation and democracy at the Royal Society of Arts (RSA) in London framed today’s crises as an attack on all three pillars: the verification of truth, meaningful deliberation, and accountability. This was not another narrow discussion about 'fake news', but a broader argument about the basic wiring of our information system. In other words: who gets to define reality, how we talk about it, and what consequences follow.

To begin the event, Eliot Higgins, OSINT pioneer and founder of Bellingcat, distinguished between three forms of democracy. In what he terms a substantial democracy, truth is tested, a diversity of voices is included, and power is constrained in ways that citizens recognise as legitimate. Institutions are not perfect, but they are at least structurally capable of being corrected. In a performative democracy, the recognisable rituals (e.g. elections, press coverage and a representative parliament) remain, yet they are increasingly about staging legitimacy rather than enabling real scrutiny. The most disturbing category is democratic simulation: a method of governance under which the appearance of democracy is carefully maintained, while its core functions are actively inverted. Here, public debate becomes a way to exhaust citizens, media becomes a channel for propaganda, and accountability is replaced by impunity dressed up as transparency.

Central to this erosion is the deliberate targeting of journalists and independent investigators. The examples Higgins discussed ranged from reporters who cannot find work because they are branded “anti‑national”, to outlets losing funding under political and legal pressure. The goal of such attacks is not only to silence individual critics, but to make investigative journalism look risky, marginal, and financially impossible. Once the infrastructure that verifies facts is weakened, the other pillars of democracy begin to rot from within.

Higgins drew on Walter Lippmann’s post‑First World War insight that “the real environment is altogether too big, too complex, and too fleeting for direct acquaintance.” Citizens never experience the world directly; they see it through filters and simplified “pictures in their heads.” For most of the twentieth century, those filters were dominated by a hierarchical media system: a small number of institutional gatekeepers, professional norms of verification, and a largely passive public audience. While not perfect, that system did impose some shared reference points about events, facts and timelines: a picture we might not all agree with, but one we could all recognise.

The erosion of trust in public institutions and the rise of social media exploded that pyramid. Platforms such as Facebook, which passed the one‑billion‑user mark in the early 2010s, replaced a top‑down information structure with a dense, multidirectional network. Everyone became both producer and consumer; information flowed in real time, and algorithmic amplification rewarded whatever generated strong emotional engagement. This “great information shift” did not just add more voices; it scrambled the filters through which people experience reality. Instead of a limited set of contested narratives, we now have fragmented, personalised worlds, each with its own sense of what is happening, and who is to blame.

Crucially, this digital upheaval landed on a political landscape already scarred by institutional failure. The discussion traced a chain of “crises of the social contract”: the Iraq War, the 2008 financial crash, the dashed hopes of Occupy Wall Street, the annexation of Crimea and war in Ukraine, and of course the Covid‑19 pandemic. In each case, many citizens saw elites make catastrophic decisions and escape meaningful consequences. The result is a corrosive mix of technologically supercharged communication and ever‑weaker trust in the institutions that interpret events and enforce accountability. News bulletins may still look much as they did fifteen years ago, yet the authority they once carried has visibly drained away.

As the event's speakers pointed out, within this environment, disinformation is not just a series of false claims that can be corrected with fact‑checking. Panellists were sceptical of the idea that a bad post can simply be debunked via a verification service. In large part, this is because the people most vulnerable to disinformation, i.e. those already alienated, polarised or targeted by manipulative campaigns, are also those least likely to seek out or trust fact‑checks, especially if these are championed by the very institutions that they distrust. By the time a correction appears, the emotional impact and social embedding of the original lie have already run their course. The problem is therefore emblematic of a deeper crisis in how societies produce shared understandings of reality.

One consequence is a drift towards extremes and closed epistemic communities. People with genuine grievances or anxieties find comfort in like‑minded groups, where repeated exposure hardens suspicion into certainty. Rather than confronting the institutions that have failed them, they turn away from those institutions entirely, embracing conspiratorial narratives that explain everything and are almost impossible to dislodge. The system begins to resemble what the event's second speaker, investigative journalist Carole Cadwalladr, called “total information collapse”: a landscape where every claim is contestable, not in the productive sense of democratic debate, but in the sense of a permanent epistemic fog.

Yet the event's conclusions were not purely pessimistic. One proposed response was to strengthen “educational and investigative communities”. These could be interpreted as spaces where people can learn how knowledge is made, and participate in its production. Examples included programmes organised by Bellingcat to foster critical inquiry from primary school onwards, as well as independent investigative units that invite public participation. The impact of crowd‑sourced projects was also highlighted, such as Bellingcat’s investigation into Venezuelan oil smuggling and spillage, conducted through open‑source communities and platforms like Discord. These models do not restore the old information pyramid. Instead, they try to build trust and verification into the new 'networked' reality, by making investigation itself more transparent and collaborative.

The conversation also pushed back against a narrow focus on hostile foreign actors. Russian disinformation campaigns were acknowledged as a serious threat, but the speakers also argued that an underrated danger lies closer to home: the gradual degradation of one’s own headlines. When domestic media systems chase engagement at the expense of accuracy, when political actors knowingly pollute the information environment for short‑term gain, and when democracies normalise the simulation of accountability, they create fertile ground for external manipulation. In that sense, disinformation is less an external virus than an opportunistic infection of a body already weakened by its own contradictions.

After all, the arc of democracy, as the speakers suggested, is not a straight line of progress, but a pattern of improvement, decline, and potential collapse. Whether we remain in the realm of substantial democracy or slide further into simulation will depend on what kinds of information institutions we choose to build next: ones that merely perform the rituals associated with truth, or ones that can distinguish between reality and its counterfeits.